“Vague but exciting”

These were the words that Mike Sendall, Tim Berners-Lee’s supervisor, wrote on Tim’s proposal for a new information management system at CERN. This was back in March 1989, and the world would never be the same again.

The problem Berners-Lee was trying to solve was surprisingly modern, even by today’s standards. The researchers at CERN were dealing with the typical issues that large and complex projects often face: staff turnover increases, project information and specifications change, and people, documents, and resources become difficult to track and keep up to date.

Tim Berners-Lee proposed a “Linked information system”, borrowing the term “Hypertext” from Ted Nelson, another computer scientist and a contemporary of his.

This “Hypertext” system would not be based on a rigid hierarchy but would use notes connected by links (references). This is similar to how people use diagrams with points and arrows to talk about complex, non-linear systems. Points would represent people, software modules, concepts, hardware, and so on, while arrows would indicate relationships such as “depends on”, “is part of”, and “refers to”.
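The points-and-arrows idea can be sketched as a small graph of notes joined by labeled links. This is only an illustration under stated assumptions: the `LinkedNotes` class and the note names below are hypothetical and do not come from the original proposal.

```python
from collections import defaultdict


class LinkedNotes:
    """A tiny graph of notes joined by typed links (hypothetical names)."""

    def __init__(self):
        # Maps a source note to a list of (relationship, target) pairs.
        self._links = defaultdict(list)

    def link(self, source, relationship, target):
        # An "arrow" from one point to another, labeled with its meaning.
        self._links[source].append((relationship, target))

    def links_from(self, source):
        # Return a copy so callers cannot modify the graph by accident.
        return list(self._links.get(source, []))


notes = LinkedNotes()
notes.link("Browser module", "depends on", "Network library")
notes.link("Browser module", "is part of", "Hypertext project")
notes.link("Design note", "refers to", "Browser module")

for relationship, target in notes.links_from("Browser module"):
    print(f"Browser module --{relationship}--> {target}")
```

Nothing here forces the notes into a tree: any note can point to any other, which is the property the proposal was after.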

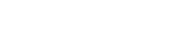

Perhaps the most iconic photograph of Sir Tim Berners-Lee at CERN.


Two applications that changed the world

Tim tried to embody all these concepts in a language that could be used to represent hypertext. A hodgepodge of languages and systems for publishing and displaying hypertext content already existed, as the concept had been familiar to academia since the 1940s. However, all of them shared one significant shortcoming: they could only use local files.

Two prominent examples were HyperCard and SGML. HyperCard was a Macintosh application that allowed a user to create “cards” with textual and graphical information. The user could navigate back and forth using buttons, and click on links to jump to a different card. Users could even write small scripts that produced animations.


SGML, on the other hand, is an ISO standard used to compose structured text with paragraphs, headings, lists, and other elements. The nice thing about SGML is that it can be implemented on any machine and is independent of the software used to display documents.

Tim based HTML on SGML, which was clever, since SGML was, at the time, a widely accepted standard. His unique addition was the hypertext link, which combines the anchor tag (A) with the HREF attribute to point to resources hosted on different machines.
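To make the anchor-plus-HREF idea concrete, here is a minimal sketch that pulls link targets out of a snippet of HTML using Python’s standard `html.parser` module. The `LinkCollector` class and the sample markup are illustrative assumptions, not code from the period.

```python
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collects the HREF value of every anchor tag it sees."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag and attribute names,
        # so <A HREF="..."> and <a href="..."> are treated alike.
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value is not None:
                    self.hrefs.append(value)


# Sample markup written for this example; the link points to another machine.
sample = (
    '<P>See the <A HREF="http://info.cern.ch/hypertext/WWW/TheProject.html">'
    "project page</A> for details.</P>"
)

collector = LinkCollector()
collector.feed(sample)
print(collector.hrefs)
```

The anchor is the only element in the snippet that refers to something outside the document itself, which is exactly what set HTML apart from the local-file systems described above.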

This meant that computers needed to be able to talk to each other and exchange messages. Of course, computer networks were already a reality at CERN and in the rest of the world at that time. But the machines needed to be able to talk “Hypertext”, and that called for a new communication protocol. A protocol is a set of agreed-upon rules that lets two or more computers communicate with each other in a specific manner. Tim named this new protocol HTTP, which, unsurprisingly, stands for HyperText Transfer Protocol. The first incarnation of HTTP (version 0.9) implemented a few simple but fundamental operations such as request/response and connect/disconnect.
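The request/response exchange in HTTP 0.9 was simple enough to sketch in a few lines: the client sends a single `GET` line with a path, and the server replies with the document and closes the connection. The example below simulates this in memory, without a real network connection. The function names, the `documents` mapping, and the error pages are assumptions made for illustration.

```python
def build_request(path):
    # An HTTP/0.9 request is one line: the GET method and a path, no headers.
    return f"GET {path}\r\n".encode("ascii")


def handle_request(raw_request, documents):
    """Return the response body for a 0.9-style request.

    `documents` is a hypothetical in-memory mapping of path -> HTML text.
    """
    line = raw_request.decode("ascii").strip()
    method, _, path = line.partition(" ")
    if method != "GET" or not path:
        # There are no status codes in this sketch; problems are reported as HTML.
        return b"<P>Bad request</P>"
    body = documents.get(path, "<P>Document not found</P>")
    # The response is just the document; a real server would then disconnect.
    return body.encode("ascii")


documents = {"/index.html": "<H1>Hello, hypertext</H1>"}
request = build_request("/index.html")
response = handle_request(request, documents)
print(request)
print(response.decode("ascii"))
```

There are no headers, status lines, or content types here; those arrived with later versions of the protocol.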

Tim wrote two keystone pieces of software: a web server that implemented HTTP, and a web browser that would read and display HTML files as hypertext documents. These two were bundled together and called WorldWideWeb (without spaces). Users could now install the web server on their workstation and publish files and documents for other people to read. Using the web browser, they could in turn connect to other people’s web servers and retrieve HTML documents from them using something called a Uniform Resource Locator (URL).
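A URL packs together everything the browser needs: which protocol to speak, which machine to contact, and which document to ask for. As a small sketch, Python’s standard `urllib.parse.urlsplit` can take one apart. The address used here is that of the first website at CERN and is included only as an illustration; it does not appear in the text above.

```python
from urllib.parse import urlsplit

# Illustrative address; any http URL would work the same way.
url = "http://info.cern.ch/hypertext/WWW/TheProject.html"
parts = urlsplit(url)

print("protocol:", parts.scheme)    # which protocol to speak
print("server:  ", parts.hostname)  # which machine to connect to
print("document:", parts.path)      # which document to request
```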

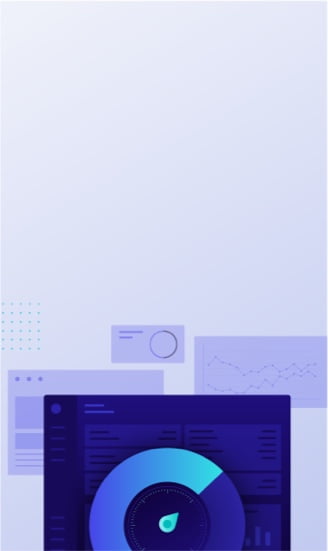

The first web server ever was Tim’s NeXT workstation at CERN (Wikimedia Commons)

“Vague but exciting… And now?”

The Web has come a long way since 1989, and the amount of change that has taken place over the past 27 years is mind-boggling. A myriad of fads, frameworks, languages, and technologies have flourished and quickly disappeared. Arguably, the Web’s inflection point was the advent of social media, which has transformed the way we communicate, do business, and have fun.

The Web’s humble beginnings can easily be forgotten or set aside. Yet, even after nearly three decades of evolution, those with an eye for detail, perhaps some romantics or the digital archaeologists of the future, will notice that some parts of the Web have remained unchanged, simply because they work. Designing systems that are robust enough to function for decades and open enough not to become obsolete is what this legacy is all about. One can become a better designer, a better software engineer, and, dare we say, even a better human being by studying the works of the old Internet wizards. Join us in the next post, where we’ll discuss what really happens when you type a URL in your browser and take an in-depth look at modern web servers, current languages, and the latest incarnation of the Hypertext protocol, HTTP/2!
